The public launch of Artsy via the New York Times is a good opportunity to describe our current technology stack.

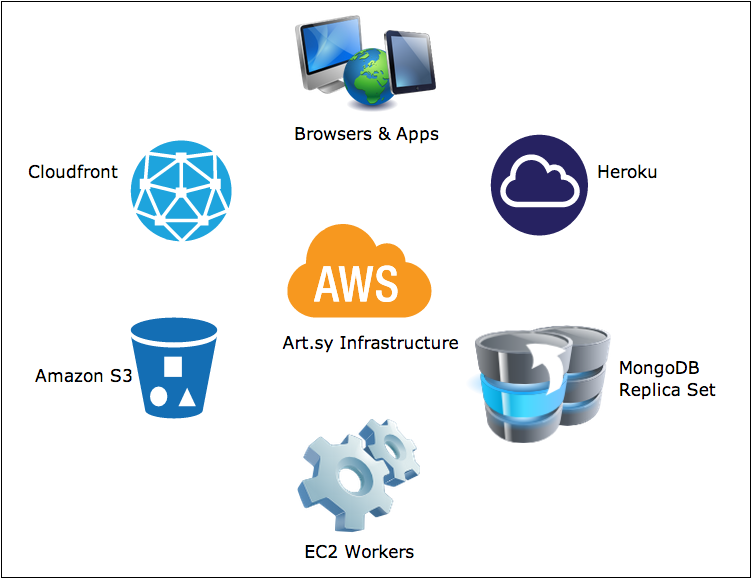

What you see when you go to Artsy is a website built with Backbone.js and written in CoffeeScript. It renders JSON data from Ruby on Rails, Ruby Grape and Node.js services. Text search is powered by Apache Solr. We also have an iOS application that talks to the same back-end Ruby API. We run all our web processes on Heroku and all job queues on Amazon EC2. Our data store is MongoDB, operated by MongoHQ, and we also run some Redis instances. Our assets, including images, are served from Amazon S3 via the CloudFront CDN. We rely heavily on the Memcached Heroku add-on, and we use SendGrid and MailChimp to send e-mail. Systems are monitored by a combination of New Relic and Pingdom. All of this is built, tested and deployed with Jenkins.

In this post I’ll go into depth on our current system architecture and tell you the story of how these parts all came together.

Early Prototypes

Artsy’s early prototypes in 2010 consisted of a combination of PHP and Java web services running on JBoss and backed by a MySQL database. The system had more in common with a large transactional banking application than with a consumer website.

In early 2011 we rebooted the project on Ruby on Rails. RDBMS storage was replaced with NoSQL MongoDB. A video was recorded at MongoNYC 2012 that goes in depth into this specific choice.

Artsy Architecture Today

Having only a handful of engineers, our goal has always been to keep the number of moving parts to an absolute minimum. With a few new engineers we were able to expand things a bit.

Artsy Website Front-End

The Artsy website is a responsive Backbone.js application written in CoffeeScript and SASS and served from a Rails back-end. The generated JavaScript and CSS files are packaged and compressed with Jammit and deployed to Amazon S3. The Rails app itself is a traditional MVC system that bootstraps application data and mostly serves SEO needs, such as meta tags, escaped fragments and page titles. Once the basic data has been rendered though, Backbone routing takes over and you’re now navigating a client-side browser app with pushState support as available, swapping frames and rendering views using JST templates and JSON data returned from the API.
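To make the bootstrapping step concrete, here is a minimal sketch of how a server-rendered page might embed initial JSON for a client-side app to pick up. It is an illustration only: the `bootstrap_script` helper and the `BOOTSTRAP` variable name are hypothetical and do not come from the Artsy codebase.

```ruby
require "json"

# Hypothetical helper: serialize data into an inline <script> tag so the
# client-side app can start from it without an extra API round trip.
def bootstrap_script(variable, data)
  # Escape "</" so a string value containing "</script>" cannot close the
  # tag early. "<\/" is still valid JSON and parses back to "</".
  json = JSON.generate(data).gsub("</") { "<\\/" }
  "<script>window.#{variable} = #{json};</script>"
end

page_data = { "artist" => { "id" => "andy-warhol", "name" => "Andy Warhol" } }
puts bootstrap_script("BOOTSTRAP", page_data)
```

On the client, the app would read `window.BOOTSTRAP` once at startup and fetch everything after that from the API.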

Core API

The website talks to the nervous system of Artsy, a RESTful API built in Ruby and Grape.

In the early days we did a ton of domain-driven design and spent a lot of time modeling concepts such as artist or artwork. The API has read and write behavior for all our domain concepts. Probably 70% of it is pure CRUD doing Mongoid queries with a layer of access control in CanCan and cache partitioning and binding using Garner.
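The shape of a typical read endpoint (look up a record, check access, serve a cached representation) can be sketched in plain Ruby. This is a simplified illustration under stated assumptions, not the CanCan or Garner API: `Artwork`, `User`, `can_read?` and `ArtworkEndpoint` are hypothetical names, and a hash stands in for the MongoDB collection.

```ruby
# Hypothetical stand-ins for domain models; not taken from the Artsy codebase.
Artwork = Struct.new(:id, :title, :published, :updated_at)
User = Struct.new(:id, :admin)

class AccessDenied < StandardError; end

# Assumed rule for this sketch: admins read everything, everyone else
# reads only published artworks.
def can_read?(user, artwork)
  user.admin || artwork.published
end

class ArtworkEndpoint
  attr_reader :renders

  def initialize(store)
    @store = store   # id => Artwork, standing in for a database collection
    @cache = {}      # cache key => rendered representation
    @renders = 0     # counts cache misses, for demonstration
  end

  def show(user, id)
    artwork = @store.fetch(id) { raise KeyError, "artwork #{id} not found" }
    raise AccessDenied, "cannot read artwork #{id}" unless can_read?(user, artwork)

    # Bind the cache entry to the record's id and last update time, so an
    # updated record naturally misses the old entry.
    key = [artwork.id, artwork.updated_at]
    @cache[key] ||= render(artwork)
  end

  private

  def render(artwork)
    @renders += 1
    { "id" => artwork.id, "title" => artwork.title }
  end
end
```

The access check runs on every request, before the cache is consulted, so a cached representation is never served to a user who is not allowed to see the record.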

Search Autocomplete

The first iteration of the website’s text search was powered by mongoid_fulltext. Today we run an Apache Solr leader-follower environment hosted on EC2.
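For readers unfamiliar with the first approach: mongoid_fulltext is, to the best of my knowledge, based on n-gram matching. The sketch below shows that general technique in plain Ruby with an in-memory index. The `ngrams` helper and `NgramIndex` class are hypothetical and are not the gem's API; the scoring is deliberately simplistic.

```ruby
# Break a string into overlapping character n-grams after normalizing it.
def ngrams(text, width = 3)
  normalized = text.downcase.gsub(/[^a-z0-9]/, "")
  return [] if normalized.length < width
  (0..normalized.length - width).map { |i| normalized[i, width] }
end

class NgramIndex
  def initialize
    # n-gram => list of names that contain it
    @postings = Hash.new { |hash, gram| hash[gram] = [] }
  end

  def add(name)
    ngrams(name).uniq.each { |gram| @postings[gram] << name }
  end

  # Rank names by how many of the query's n-grams they contain,
  # breaking ties alphabetically.
  def search(query, limit = 5)
    scores = Hash.new(0)
    ngrams(query).uniq.each do |gram|
      @postings.fetch(gram, []).each { |name| scores[name] += 1 }
    end
    scores.sort_by { |name, score| [-score, name] }.first(limit).map(&:first)
  end
end

index = NgramIndex.new
["Andy Warhol", "Andrew Wyeth", "Pablo Picasso"].each { |name| index.add(name) }
p index.search("warh")   # => ["Andy Warhol"]
p index.search("picaso") # => ["Pablo Picasso"], despite the misspelling
```

Because matching works on fragments rather than whole words, partial and slightly misspelled queries still find results, which is what makes the technique attractive for autocomplete.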

Offline Indexes

The indexes that serve complex queries like related artists/artworks and filtered searches of artworks are all built offline. Our index-building system runs continuously, repeatedly pulling data from our production system to rebuild whichever index is most out of date. The most current indexes are imported back into production by a daily batch process, and we swap the old indexes out atomically using mongoid_collection_snapshot.

One such index is a similarity graph that we query to produce the most similar results on the website; other indexes serve filtering needs. We run these processes nightly.
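The two ideas in this section, rebuilding the stalest index first and publishing a finished index in one atomic step, can be sketched as follows. This is an in-memory illustration only, not the mongoid_collection_snapshot API: `most_out_of_date` and `SnapshotStore` are hypothetical names, and timestamps are assumed to be plain integers.

```ruby
# Pick the index whose last build is oldest; indexes that have never been
# built (nil timestamp) sort first. Returns nil when there are no indexes.
def most_out_of_date(last_built_at)
  last_built_at.min_by { |_name, built_at| built_at || 0 }&.first
end

class SnapshotStore
  def initialize
    @snapshots = {}   # snapshot name => index data
    @current = nil    # name of the snapshot readers should use
    @counter = 0
    @lock = Mutex.new
  end

  # Takes a fully built index and publishes it by switching one reference.
  # Readers see either the old snapshot or the new one, never a partial build.
  def publish(data)
    @lock.synchronize do
      @counter += 1
      name = "snapshot_#{@counter}"
      @snapshots[name] = data.freeze   # shallow freeze: top-level hash only
      previous = @current
      @current = name
      @snapshots.delete(previous) if previous
      name
    end
  end

  def latest
    @lock.synchronize { @snapshots[@current] }
  end
end

similar = SnapshotStore.new
similar.publish({ "andy-warhol" => ["roy-lichtenstein"] })
similar.publish({ "andy-warhol" => ["roy-lichtenstein", "keith-haring"] })
p similar.latest["andy-warhol"]
```

The expensive work happens before `publish` is called, so the only step that readers can observe is the final reference switch.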

Admin Back-End and Partner CMS

The Artsy CMS and the Admin system are two newer projects and serve the needs of our partners and our internal back-end needs, respectively. These are built on a thin Node.js server that proxies requests to our API using node-http-proxy. They consist of a client-side Backbone.js application with assets packaged with nap. This is a lot like our website, but completely decoupled from the main Rails application and sharing the same technology for both client and server with CoffeeScript and Jade.

Folio Partner App

Artsy makes a free iOS application, called Folio, which lets our partners display their inventory at art fairs.

Folio is a native iOS implementation. The interface is heavily skinned UIKit with CoreData for storage. Our network code was originally a thin layer on top of NSURLConnection, but for our forthcoming update, we’ve rewritten it to use AFNetworking. We manage external dependencies with CocoaPods.

Want More Specifics? Have Questions?

We hope you find this useful and are happy to describe any aspect of our system on this blog. Please ask questions below; we’ll be happy to answer them.

Editor’s Note: This post has been updated as part of an effort to adopt more inclusive language across Artsy’s GitHub repositories and editorial content (RFC).
